Mapping AI Readiness In R&D: Why It Matters

Artificial intelligence is transforming how scientific work is conducted. It can accelerate discovery cycles, deepen analysis, and shift how data and experimentation interact. Even with that potential, adoption of AI across research environments is uneven. Some organizations are integrating AI directly into lab workflows, while others are still building basic literacy, clarifying AI’s role, or exploring limited pilot applications.

BHDP gathered insights from six scientific organizations across life sciences, analytical chemistry, consumer product development, and early-stage innovation, revealing a wide spectrum of readiness. Organizational differences are shaped as much by culture, data practices, digital fluency, and spatial constraints as by technology itself. R&D environments also introduce unique complexities, including the integration of instruments and equipment, intricate workflows, multidisciplinary teams, and stringent quality or regulatory requirements.

Across all of this, one takeaway stands out: AI maturity is not simply a technology milestone. It is a socio-technical evolution that depends on aligning people, processes, and place.

AI Is Transforming Scientific Work—But Adoption Is Uneven

As scientific work becomes more data-intensive and computationally supported, laboratory environments must evolve to enable digital visibility, cross-functional collaboration, workflow orchestration, reliable data capture, and faster cycles of experimentation. Understanding where an organization sits on this continuum helps clarify which barriers matter most, and it highlights the strategic and spatial interventions that can accelerate progress.

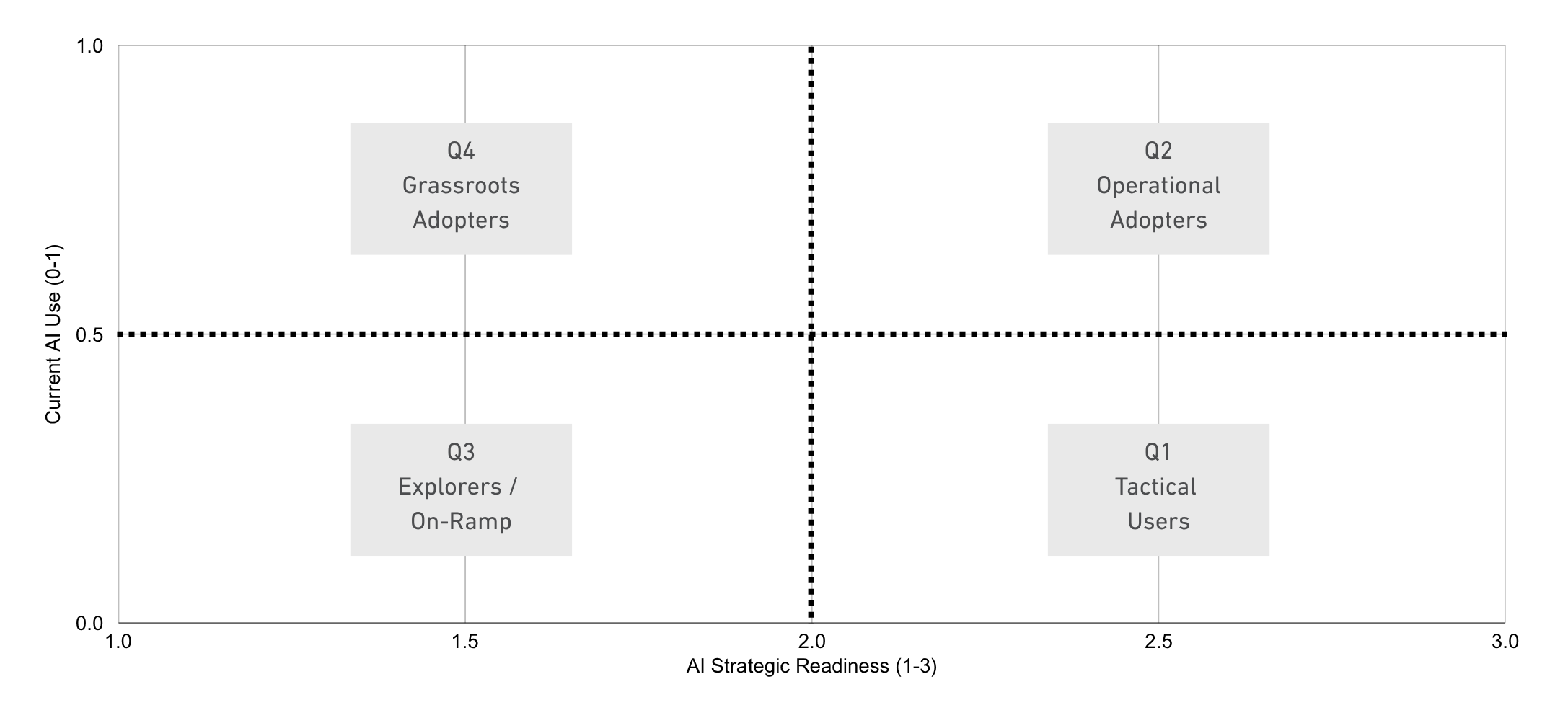

To support that level of clarity, BHDP developed a practical AI-readiness framework. This quadrant-based model maps readiness and use across R&D organizations.

How BHDP Built the AI Readiness Framework

BHDP gathered insights from 11 individuals across six organizations spanning life sciences, analytical labs, consumer product R&D, and early-stage innovation teams. The input combined structured survey questions with open-ended responses, giving both a quantitative snapshot and deeper context for how AI is actually used, understood, and anticipated in lab settings.

The survey focused on three core dimensions of maturity:

- Whether AI is currently part of lab workflows

- How ready organizations are to invest in AI-driven transformation

- Where AI is being applied (from data mining and predictive analytics to image analysis and workflow automation)

Responses were translated into simple ordinal scores, enabling consistent comparisons and positioning across the organizations.

Methods And Dataset Overview

To compare organizations, BHDP coded responses into scores:

- Current AI Use: Yes = 1, No = 0, Not sure = 0.5

- AI Readiness: “Exploring options” = 2, “Currently investing” = 3 (the only two levels respondents selected)

- Application Breadth: Count of AI domains selected (0–6)

Scores were averaged to create a composite view of current use, strategic readiness, and application scope. Open-ended responses were analyzed for recurring themes, including workflow acceleration, data-driven decision-making, integration challenges, capability needs, and concerns about trust and interpretability. Quantitative scores determined quadrant placement, while qualitative patterns helped explain how organizations with similar scores might behave differently in practice.
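As a rough illustration, the coding scheme above can be sketched in a few lines of Python. This is a minimal sketch, not BHDP's actual tooling: the field names (`org`, `current_use`, `readiness`, `domains`), the function names, and the sample responses are all hypothetical, and the sketch assumes that scores are averaged across each organization's respondents.

```python
from collections import defaultdict
from statistics import mean

# Coding scheme from the list above.
USE_SCORES = {"Yes": 1.0, "No": 0.0, "Not sure": 0.5}
READINESS_SCORES = {"Exploring options": 2, "Currently investing": 3}


def score_response(response):
    """Convert one survey response into the three ordinal scores."""
    return {
        "use": USE_SCORES[response["current_use"]],
        "readiness": READINESS_SCORES[response["readiness"]],
        "breadth": len(response["domains"]),  # count of AI domains selected
    }


def composite_by_org(responses):
    """Average each score across an organization's respondents.

    Grouping by organization is an assumption of this sketch.
    """
    grouped = defaultdict(list)
    for response in responses:
        grouped[response["org"]].append(score_response(response))
    return {
        org: {key: mean(s[key] for s in scores)
              for key in ("use", "readiness", "breadth")}
        for org, scores in grouped.items()
    }


# Hypothetical responses for illustration only; not BHDP survey data.
sample = [
    {"org": "OrgA", "current_use": "Yes",
     "readiness": "Currently investing",
     "domains": ["data mining", "predictive analytics"]},
    {"org": "OrgA", "current_use": "Not sure",
     "readiness": "Exploring options", "domains": []},
    {"org": "OrgB", "current_use": "No",
     "readiness": "Exploring options", "domains": []},
]

composites = composite_by_org(sample)
```

With these made-up inputs, OrgA averages to a use score of 0.75 and a readiness score of 2.5, which shows how a "Not sure" response pulls an organization's position toward the middle of the use axis.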

How The Scoring Model Works

The scoring model creates a structured way to compare organizations and reveal patterns across the dataset. It also supports two complementary views:

- A quadrant table that assigns each organization into a typology based on composite scores

- A visual AI maturity map that plots organizations along continuous axes (readiness and use), with bubble size indicating application breadth

Together, these views provide both diagnostic classification and context for how organizations relate to one another across the adoption landscape.
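The two views can be approximated with a small sketch like the one below. The cut-off values are hypothetical, since the article does not state the thresholds BHDP used to separate "high" from "low"; the function names and the sample values are also illustrative assumptions. Quadrant labels follow the four typologies the framework defines.

```python
# Hypothetical cut-offs; the thresholds BHDP used are not published here.
USE_CUTOFF = 0.5
READINESS_CUTOFF = 2.5


def assign_quadrant(use, readiness,
                    use_cutoff=USE_CUTOFF,
                    readiness_cutoff=READINESS_CUTOFF):
    """Classify an organization from its composite use and readiness scores."""
    high_use = use >= use_cutoff
    high_readiness = readiness >= readiness_cutoff
    if high_readiness and high_use:
        return "Q1: Operational Adopters"
    if high_readiness:
        return "Q2: Tactical Users"
    if high_use:
        return "Q4: Grassroots Adopters"
    return "Q3: Explorers / On-Ramp"


def map_point(org, use, readiness, breadth):
    """Build one entry for the maturity map: position plus bubble size."""
    return {
        "org": org,
        "readiness": readiness,  # one continuous axis
        "use": use,              # the other continuous axis
        "bubble_size": breadth,  # application breadth
        "quadrant": assign_quadrant(use, readiness),
    }


# Hypothetical composite scores for illustration only.
point = map_point("OrgA", use=0.75, readiness=2.5, breadth=1)
```

Keeping the continuous scores next to the quadrant label is a deliberate choice in this sketch: two organizations can share a quadrant while sitting far apart on the map.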

What We’re Seeing Across R&D Organizations

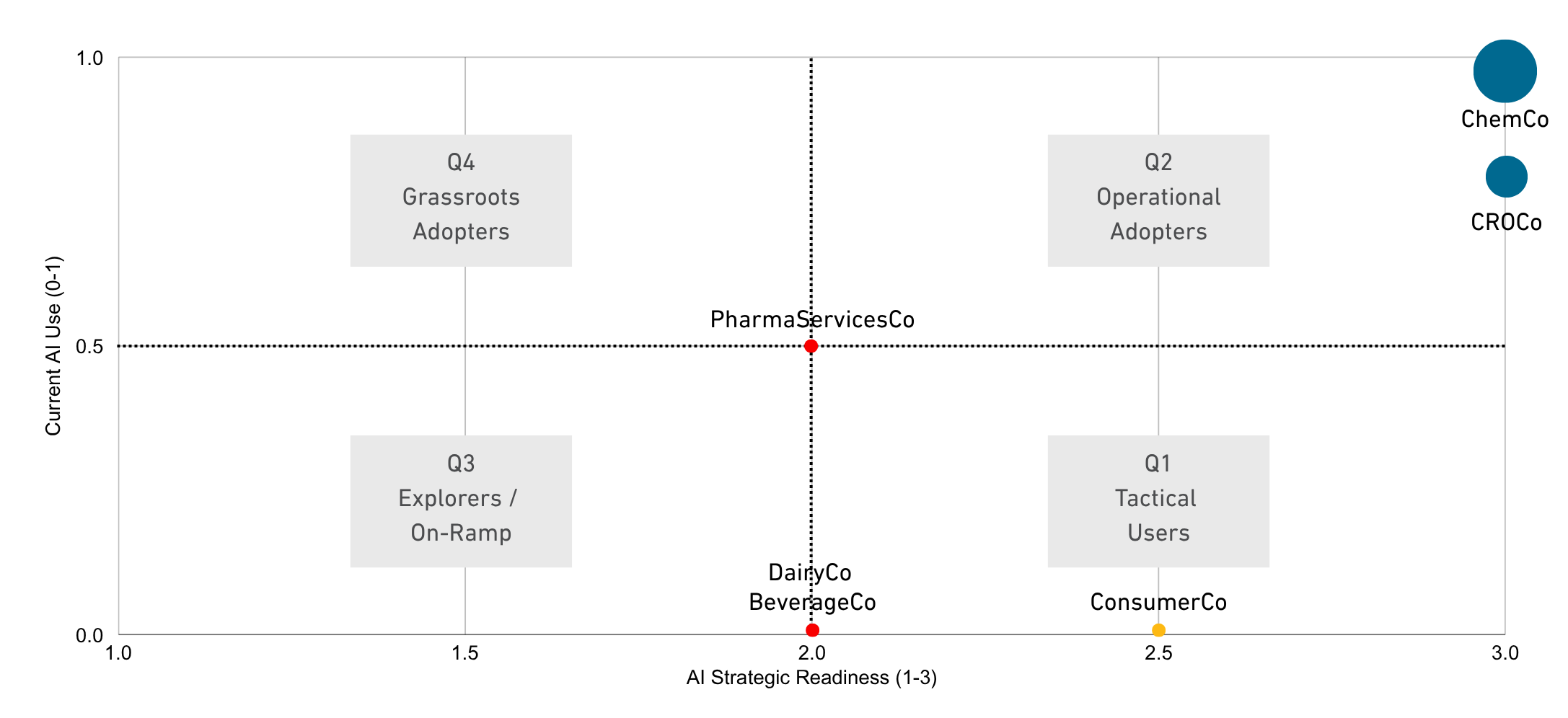

BHDP’s merged dataset includes six organizations represented by 11 respondents: three from ChemCo, three from CROCo, two from ConsumerCo, and one each from BeverageCo, DairyCo, and PharmaServicesCo. While the sample is exploratory, it reveals clear directional patterns and a meaningful maturity gradient, ranging from active implementation to early-stage exploration.

Current Uses Of AI

Across respondents, 45.5% report AI is already being used in at least some lab workflows. Another 36.4% indicate AI is not currently in use, and 18.2% are not sure. This distribution suggests that adoption is happening in pockets rather than uniformly.

The “not sure” responses are especially revealing. In some environments, AI may be embedded in vendor tools or software solutions in ways that aren’t fully visible to lab staff. This lack of visibility can make it harder to build a shared understanding of what the AI is doing today and what it could do next for a given organization.

Strategic Readiness to Invest

When asked about readiness to invest in AI-driven lab transformation, 60% of respondents indicate their organization is currently investing, while 40% report they are exploring options. No respondents selected “not ready” or “no plans.” This suggests that even organizations with little or no current AI use are, at a minimum, conceptually open to AI as a future direction.

At the same time, readiness does not automatically translate into operational change. This tension becomes more visible when examining how and where AI is being applied.

AI Application Areas

Although strategic interest appears relatively strong, the breadth of concrete application remains limited. Structured-response data shows AI usage clustering in a small set of domains. Data mining is the most frequently selected application area (27.3%), followed by predictive analytics (18.2%). Image analysis and workflow automation were each selected by 9.1% of respondents.

ChemCo is the clearest example of a multi-domain AI footprint in the structured items. CROCo’s AI activity emerges more strongly in open-ended comments than in checkbox selections. This suggests experimentation may be occurring in ways that aren’t yet fully captured by formal categories or internal reporting structures.

Barriers To AI Adoption

Across organizations, three barriers appear most frequently:

- Integration with existing systems

- Lack of internal expertise

- Trust/interpretability

These barriers reflect the realities of introducing AI into complex socio-technical lab ecosystems, which is a larger undertaking than simply adopting a new tool.

Integration With Existing Systems

Integration challenges are cited by eight of the 11 respondents (72.7%). Connecting AI tools to legacy systems, instruments, and data repositories remains one of the biggest obstacles. In lab environments, AI cannot operate in a vacuum. It must connect to real equipment, real data sources, and real workflows without introducing friction or uncertainty.

This challenge becomes especially visible when organizations move beyond concept and into implementation. The complexity of systems, workflows, and handoffs becomes difficult to ignore.

Lack Of Internal Expertise

A lack of internal expertise is cited by 54.5% of respondents. They point to gaps in skills and capacity that limit an organization’s ability to design, deploy, and maintain AI-enabled workflows. Capability gaps can slow progress even when an organization is motivated and investing in AI.

In practice, this often shows up as difficulty moving from early experimentation to consistent use across teams. It can also contribute to uneven adoption, where a few individuals or groups advance while others remain uncertain.

Trust And Interpretability

Trust and interpretability concerns are raised by 45.5% of respondents. This reflects discomfort with relying on outputs from models that scientists cannot fully inspect or explain. Especially in high-stakes scientific decisions, confidence in results—and clarity about how results were produced—matters.

Interestingly, leadership buy-in, cost, and regulatory concerns appear less frequently than integration, expertise, and trust. In this dataset, the most pressing challenges are less about “wanting AI” and more about making AI workable, reliable, and usable inside real lab conditions.
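For readers who want to check the arithmetic, the barrier percentages can be reproduced from respondent counts. In the sketch below, only the integration count (eight of 11) is stated in the text; the counts of six and five are inferred from the reported percentages and should be read as assumptions, as should the variable and function names.

```python
TOTAL_RESPONDENTS = 11

# The integration count is stated in the text. The other two counts are
# inferred from the reported percentages (assumption).
barrier_counts = {
    "Integration with existing systems": 8,
    "Lack of internal expertise": 6,
    "Trust/interpretability": 5,
}


def share(count, total=TOTAL_RESPONDENTS):
    """Return the percentage of respondents, rounded to one decimal place."""
    return round(100 * count / total, 1)


barrier_shares = {name: share(n) for name, n in barrier_counts.items()}

# Print barriers from most to least frequently cited.
for name, pct in sorted(barrier_shares.items(),
                        key=lambda item: item[1], reverse=True):
    print(f"{name}: {pct}%")
```

With a sample of 11, each respondent moves a percentage by roughly nine points, which is worth keeping in mind when comparing barriers that differ by a single response.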

The AI Maturity Quadrants

BHDP’s framework is organized into four typologies, based on current AI use in workflows and readiness to support transformative applications: Operational Adopters, Tactical Users, Explorers / On-Ramp, and Grassroots Adopters. Each quadrant represents a distinct mode of progress and a different set of needs.

Q1: Operational Adopters

ChemCo and CROCo represent this quadrant. AI is already integrated into lab functions, including data mining, predictive modeling, automated analytics, and workflow optimization. Their challenges are more advanced: integrating legacy systems, validating models for high-stakes decisions, building competency across teams, and scaling adoption enterprise-wide.

For these organizations, the central question is no longer whether AI belongs in the lab. The focus shifts to governance, data harmonization, and sustaining momentum across functions as AI use expands.

Q2: Tactical Users

ConsumerCo represents this quadrant. Leadership expresses strong interest and invests in AI platforms or infrastructure, yet day-to-day adoption remains limited. The gap is not motivation; it is execution.

These organizations need high-impact use cases to translate enthusiasm into tangible results. They also need mechanisms that connect strategic intent with lab-level practice, turning investment into operational capability.

Q3: Explorers / On-Ramp

BeverageCo, DairyCo, and PharmaServicesCo occupy this quadrant. These organizations are in the earliest stages of maturity, viewing AI as potentially valuable but lacking the capability, exposure, or conceptual grounding to act with confidence.

Their narratives reflect uncertainty and limited clarity around how AI connects to current workflows. For these groups, the earliest steps are education, low-risk pilots, and foundational data readiness.

Q4: Grassroots Adopters

This quadrant was not represented in the dataset, but it remains important for benchmarking. Grassroots Adopters have pockets of bottom-up AI use—through vendor tools, team-level initiatives, or localized experimentation—without a clear enterprise strategy or governance.

The opportunity is to consolidate efforts, create oversight mechanisms, and turn distributed experimentation into coordinated, scalable impact.

Design Strategy Implications By Quadrant

A major insight of the framework is that AI maturity is inseparable from spatial and organizational readiness. As R&D workflows become more data-intensive, laboratory environments must support new adjacencies, cross-disciplinary collaboration, and digital infrastructure that makes AI-enabled work visible, interpretable, and scalable.

Below are the design implications BHDP identified for each quadrant.

Q1: Operational Adopters (High Readiness, High Use)

Organizations already integrating AI at scale benefit from environments that support optimization and enterprise-wide harmonization:

- Build infrastructure for high-throughput AI workflows (automation-ready spaces, high-bandwidth networks, edge processing, compute adjacency)

- Co-locate teams (data scientists, lab technicians, automation engineers) to shorten cycles between analysis and experimentation

- Centralize dashboards and orchestration hubs to monitor AI operations

- Create agile zones for rapid prototyping, workflow iteration, and tool testing

- Support human–AI collaboration with spaces for interpretation, scenario analysis, and review

Strategically, the recommendation is to standardize workflows, invest in enterprise-scale infrastructure, formalize AI governance, and integrate human decision-making spaces that allow AI to scale with confidence.

Q2: Tactical Users (High Readiness, Low Use)

For organizations working to translate strategic interest into everyday practice:

- Flexible “sandbox” labs for pilot projects with modular benches and digital plug-and-play setups

- Visible digital workflows (dashboards, analytics pipelines, prototyping zones)

- Cross-functional spaces connecting IT, analytics, and lab teams

- Infrastructure planning for future scaling (power, data routing, environmental stability)

- Training-forward rooms for onboarding, hands-on workshops, and AI literacy

Strategically, the recommendation is to launch a few high-impact pilots, build adoption teams that pair lab and digital experts, strengthen data readiness, and communicate a clear narrative linking AI to organizational goals.

Q3: Explorers / On-Ramp (Low Readiness, Low Use)

For early-stage organizations that need education and confidence-building:

- Introductory digital infrastructure (standardized data capture, device connectivity, accessible workstations)

- Flexible, discovery-oriented lab layouts with moveable equipment and rapid reconfiguration

- High-visibility learning spaces to showcase AI demos and vendor tools

- Early support for data maturity (metadata, basic analytics, clean workflows)

- Collaborative, curiosity-driven spaces (lounges, brainstorming zones, pilot rooms)

Strategically, the recommendation is to build AI literacy, identify early use cases, run low-risk pilots, and create cross-functional, early-adopter teams to develop confidence and capability.

Q4: Grassroots Adopters (Low Readiness, High Use)

For organizations with bottom-up AI activity but limited strategy:

- Centralized spaces for shared AI tools, analytics hubs, or “AI collaboratories”

- Governance-supportive layouts to align standards, validation, and data integrity

- Consolidation of digital and physical tools to reduce fragmentation

- Spaces for vendor integration, testing, and knowledge transfer

- Leadership visibility through insight walls, project galleries, and review areas

Strategically, the recommendation is to map and elevate existing pockets of success, establish light governance, connect dispersed adopters, and begin building a coherent enterprise AI vision.

Cross-Quadrant Design Principles

Across all maturity levels, several principles apply:

- Digitally Native Planning: Design for seamless data flow, computation, storage, and visualization

- Human-Centric Integration: Emphasize interpretation, judgment, and interdisciplinary collaboration

- Systems-Level Infrastructure: Make networking, environmental controls, and interoperability foundational

- Learning, Testing, Scaling Zones: Provide safe spaces for experimentation and adoption

- Translational Interfaces: Bridge lab workflows, digital processes, and leadership decision-making

AI Maturity Is Socio-Technical

This analysis highlights an R&D landscape in which organizations are actively navigating the opportunities and constraints of AI-enabled lab environments. Adoption does not follow a uniform trajectory. Instead, organizations cluster into distinct maturity profiles shaped by operational realities, digital fluency, and internal confidence in AI’s role in scientific work.

The quadrant model offers a practical roadmap: it helps organizations understand where they are, what barriers matter most, and which design and operational interventions will produce the greatest impact. Across all quadrants, the desire is consistent—faster insight generation, clearer understanding of AI’s role in lab contexts, and stronger internal capabilities to interpret and validate AI outputs. At the same time, integration challenges, data fragmentation, and limited training continue to slow progress, even in organizations with high strategic commitment.

From a design-strategy perspective, AI maturity is inseparable from spatial and organizational readiness. As lab workflows become increasingly data-intensive, the physical and digital workplace becomes a critical lever for enabling transformation. By aligning AI strategy, human capability, and the built environment, organizations can move toward more adaptive, resilient, and insight-driven futures—where AI is not just an aspiration, but an operational asset embedded in scientific practice.

BHDP Can Help Make AI-Ready R&D Environments Real

If your organization is investing in AI but your lab environments, workflows, and infrastructure aren’t set up to support it, you’re leaving adoption to chance.

Using our AI Maturity Quadrant Framework, BHDP can help you evaluate AI readiness across your systems and develop a design strategy to build laboratory environments that support your teams and work.

Reach out to BHDP today to start building your laboratory of tomorrow.

Date

March 24, 2026

Topic

Laboratory Design

Technology